
Parents have raised concerns about social media for years—and now the courts are starting to agree. In this episode, we’re joined by Tori Hirsch of the National Center on Sexual Exploitation (NCOSE) to break down two major cases and what they mean for families.

Tori Hirsch serves as legal counsel at the National Center on Sexual Exploitation Law Center. She engages in civil litigation against mainstream sexual exploiters, including some of the world’s largest tech companies, and specializes in reviewing and drafting legislation to prevent sexual exploitation, including online sexual abuse of children and image-based sexual abuse. Much of her legislative focus is on preventing childhood exposure to pornography and reforming state laws regarding commercial sexual exploitation. Tori is a graduate of Regent University School of Law and is licensed to practice law in Maryland and New Hampshire.

Transcription is done by AI software. While technology is an incredible tool for automating this process, misspellings and typos may accompany it. Please keep that in mind as you read.

SPEAKER_02

Welcome to the Next Talk Podcast. We are a nonprofit passionate about keeping kids safe online. We’re learning together how to navigate tech, culture, and faith with our kids. We have Tori Hirsch back on the podcast today to talk about two landmark verdicts. Tori, thank you for being back.

SPEAKER_01

Thank you for having me back. I’m excited to talk about these verdicts today.

SPEAKER_02

If people didn’t listen to your other episode, can you give a description of who you are and what organization you’re with? Tell us your background.

SPEAKER_01

Yeah, absolutely. My name is Tori Hirsch. I’m legal counsel with the National Center on Sexual Exploitation. We’re a nonpartisan, nonprofit organization based out of Washington, D.C. And our mission really is to reduce sexual exploitation in all of its forms. And, you know, we want to see people live in a world where they can live and love without the fear of exploitation.

SPEAKER_02

NCOSE is what we call it for short. They do great work, and they’re really on the front lines with the legislative work, tackling big tech issues and all of that. We love partnering with them and learning from them, and Tori has been on the podcast before, like I said. So, Tori, I want to dive in. There are two landmark verdicts that happened recently, one in New Mexico and one in California. Social media platforms were found liable, and I’d like you to break each one down for us so that we non-legal people can understand it. Why don’t we start with the New Mexico one?

SPEAKER_01

I guess I can give an overview of both, because they both involve Meta as a defendant. The New Mexico lawsuit, to begin with, is a case where the New Mexico Attorney General sued Meta for violating the state’s consumer protection laws. They brought a number of claims under that law, basically alleging that Meta misled users about the safety of its platforms, which include Instagram, Facebook, and all of the brands under Meta, and that it enabled child sexual exploitation on those platforms. They accused Meta of allowing predators access to minors and connecting predators with victims, and a piece of the claims also dealt with the design of the platforms. They said the design basically allowed predators to maximize engagement, and that infinite scroll features integral to the platform’s design fostered addictive behavior. So there are really two pieces: the child exploitation piece, connecting predators with victims, and the claim that the design of the platforms was addictive and led to mental health harms.

SPEAKER_02

And this was a New Mexico jury that found them liable for $375 million. This was a big verdict.

SPEAKER_01

Yes, it was a very big verdict, and this case is a huge victory. It’s the first time a social media company has been brought before a judge and jury, actually gone through a trial, and gotten a real verdict at the end of it. The jury found 75,000 violations of the consumer protection law, and the state statute sets a maximum penalty of $5,000 per violation. Multiply those numbers together and that’s how you get to the $375 million verdict.

SPEAKER_02

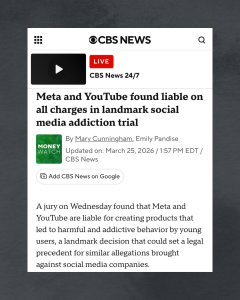

Okay. And then let’s switch to California and tell us about that verdict because it was also from a jury.

SPEAKER_01

Yes, also a jury verdict, and again it found Meta liable. This was a bigger case, so it also involved Google and Google’s platforms, which include YouTube. Initially, this case was also suing Snapchat (Snap is the parent company) and TikTok. Those two both settled before trial, but Meta and Google actually went to trial, and the jury found them liable on these claims for social media addiction under products liability. This case was called a bellwether case, or a bellwether trial, and it represented 1,600 victims. Normally a case has a few; class actions sometimes have a few thousand, but having 1,600 victims is hugely significant. Basically, the purpose of this case was to do a temperature check to see how these cases are going to go as they’re brought. Now, obviously, you have a different jury for every case. I think it was 10 out of the 12, or 9 out of the 12, who found Meta liable in this case. But it’s really just a temperature check to see how these cases are going to go moving forward. So the claims in this case, like I said, were for social media addiction, brought under a products liability theory.

SPEAKER_02

I think this sends a signal, because these came down within the same week. I think it signals how public opinion on social media is shifting. Don’t you? These were our peers who listened to all the evidence and found these companies liable.

SPEAKER_01

Yeah, absolutely. I think it’s sending a huge signal. For those of us on the advocacy side of the question of what role big tech plays in society, it’s a huge encouragement to see that these tech giants are finally going to face the law and be held to account for the harms they cause. We expect companies to make a safe product, and that is required of every industry in the United States right now except big tech. For so many years, we’ve allowed big tech to get away with what they’re doing because you literally could not sue them, because of Section 230 of the Communications Decency Act. It was very difficult to get to the point we’re at now. It took the strategy of bringing these cases under products liability, which has nothing to do with the actual content or speech on the platforms, but with how these platforms were designed to operate: designed to addict and designed to harm kids. Now we’re finally saying what advocates and organizations like NCOSE have been saying for years: these platforms are not designed with minors’ interests in mind. And now we’re finally seeing that a jury of our peers agrees that they were intentionally designed to addict and harm children, and the companies are finally having to pay, in a small part, for that. I definitely think it signals the tide is turning, and it’s turning in the direction of listening to parents, children, and families who are trying to deal with the harms and struggles of our increasingly online world and what those realities mean as kids engage online.

SPEAKER_02

Okay, I want to unpack that a little, because that was a lot of great information. So Section 230 is an old law on the books, but it’s really not applicable anymore because of how tech has developed. Is that correct?

SPEAKER_01

That’s not exactly correct. Section 230 of the Communications Decency Act is a law that was passed in 1996, at the beginning of the internet. Tech was developing, the internet was still a baby, and the companies came to Congress and said, listen, we’re not going to be able to exist because we’re afraid of potential lawsuits. We need some protection, Good Samaritan protection for blocking and screening offensive content, and we also shouldn’t be able to be sued for what a third party posts. That has been the bubble in which tech, which used to be little tech and is now big tech, has operated. They would bring a Section 230 defense any time they were sued, whether it was because of something somebody posted on their platform or, honestly, in cases like this one, basically saying they’re not liable for anything a third party posts. They would also argue that the way the platform’s design works involves third-party content. So they’ve been protected for years and years. And it does still apply; it’s still good law. They’re still using it left and right as a defense in every case brought against them. These two were maybe some of the first cases where the 230 defense was not applicable and they weren’t able to use it successfully, if that makes sense.

SPEAKER_02

Yes. Okay, that’s a great explanation. Now help me understand this. We had you on before talking about the Kids Online Safety Act. That is supposed to fill in the gaps and fix Section 230, correct? But it hasn’t passed yet.

SPEAKER_01

It wouldn’t directly fix Section 230, because it doesn’t amend Section 230. Section 230 would still exist. But the idea and the brilliance behind KOSA, the Kids Online Safety Act, specifically its duty of care, is that it would require tech platforms to exercise a minimum level of care for minor users, basically saying you need to design your platform with the safety of minor users in mind. So it would have a substantial effect on how these platforms are run and how they operate at the design level, but it wouldn’t necessarily touch Section 230. It wouldn’t touch speech either. It would simply resolve the problems we’re seeing with the way the platforms are designed to foster addictive behavior and things like that.

SPEAKER_02

So these two state jury verdicts we just unpacked were brought under state law, consumer protection in New Mexico and products liability in California, which made them about the product rather than the content. And that’s why the Section 230 defense didn’t come into play here, correct?

SPEAKER_01

That’s right. Meta still argued that it had a 230 defense, but the judge disagreed and said, no, this doesn’t fall under Section 230, so we’re going to proceed with the case. They argued that because of the way the algorithm and platform are designed, the platform is publishing content that implicates user-generated content, and therefore they have a 230 defense, because Section 230 deals with user-generated, third-party content. But that wasn’t a successful argument. They are going to appeal, and I assume they’ll continue to raise 230 in that regard. But at this point, they weren’t successful with that argument.

SPEAKER_02

How long do you think the appeal process will take? What are the next steps, and what do you think is going to happen here?

SPEAKER_01

Yeah, I don’t really know how long. The trial itself, the LA social media trial in California, lasted eight weeks, which is a very long time and means there was tons of evidence. As far as California’s civil procedure rules go, I’m not sure exactly how much time they have, but there is definitely a deadline by which they have to appeal. And appeals can sometimes run for years. It really just depends. But Meta seems motivated to turn this around and have an appeals court reverse. So I’m not sure exactly how long it will take, but it’s definitely something to watch for.

SPEAKER_02

Okay. I want to talk to you a little more about the product liability aspect of this. I’ve heard it compared to the tobacco industry. Tell me your hot take on that.

SPEAKER_01

Yeah, I think that’s an excellent comparison. During the tobacco trials decades ago, tobacco companies were operating in a similar space to today’s social media companies. Children were being harmed. There was some rhetoric that smoking was harmful, that tobacco was harmful, but there wasn’t hard evidence being brought in a court of law showing that. When those trials happened, it created a snowball effect: certain members of the public knew it to be true or had been harmed by it, and then it became a nationwide consensus that tobacco is harmful, smoking is harmful, especially to children, and tobacco shouldn’t be marketed to kids. In a similar vein, we see a lot of harms from social media. There are parents who have lost a child because of harms from these platforms, and they’re raising the alarm. So hopefully this is the start of that snowball effect, the tide turning toward positive change.

SPEAKER_02

I think that’s a really fair assessment, comparing product liability in the tobacco industry to what we’re seeing with these lawsuits. They’re actually talking about the design of the product and how it was created, correct? Can you speak a little to that, and to what we learned from the verdicts?

SPEAKER_01

Sure. I can highlight some of the evidence uncovered during the trial, if that would be helpful, because it speaks directly to the design of the platforms. There were tons and tons of internal communications, whether emails or chats between executives and employees, about their features and what people were working on: design features of the platform, new features, parental controls that would be rolled out. One of the things highlighted at trial was that executives knew the features and products were dangerous but still chose to roll them out anyway. There were even communications like executives saying that Instagram is a drug and “we’re pushers.” There was evidence from experts that the motion of scrolling, the infinite scroll that is now so ubiquitous on so many of these platforms, like Reels, is similar to pulling a slot machine lever, and it produces the same dopamine reaction in your brain as it does for someone gambling at a casino. This is what’s being put on our kids. Those are just a few pieces of evidence: literal internal chats between executives, and also internal surveys that Meta conducted itself. One survey asked people about bad experiences or encounters. I think they surveyed around 200,000 people, and it showed that teens were consistently having bad experiences on Instagram: being solicited for sexual acts, being accosted by strangers, people trying to groom them, or just having negative experiences. That survey, which Meta conducted itself, was buried and ignored, and no changes were made to try to alleviate those dangers. Another thing was that the safety team at Meta was put under the growth team. A huge problem with that is that the safety team and the growth team have two different goals, one would think.
The safety team’s main job, hopefully, was to protect users, especially children, and make sure they were safe online. But the growth team’s main job is to hook teens and keep them online for as long as possible. So the question for the safety team should have been: is this safe? Is this new feature, this new design we’re trying to create and implement on the platform, safe? Instead, the question became: is this going to stifle growth and stifle teens using the platform? Anyone can see that the integration of those teams is extremely problematic.

SPEAKER_02

Wow, I did not know that. That’s a huge red flag. I’ve seen arguments online of, well, where are the parents? The parents have a role to play. And I get really irritated about that, because we’ve been in this space for a long time. At Next Talk, we don’t even focus on the legislative aspect, because there are so many great organizations like yours doing all of that work. We really hone in on the parent-child relationship and how to have the dialogue at home. That’s our mission: to make sure you know the online dangers, you’re communicating with your kid, and your child feels safe with you. That’s the mission of Next Talk. So I get irritated, because I feel like there’s so much work being done in this space, and many parents are involved. I know there are absent parents, and I know some parents are not in it, but for those kids even more, we need protective measures in place, right? I’m really passionate about this, because it’s going to take a while for all these laws to catch up with what’s happening, and we’re losing children in the process. So parents truly are the first line of defense right now; the home is the first line of defense. That’s why we pour all of our time and attention into that. But can you speak to that argument of, well, where are you, parents? This is your responsibility.

SPEAKER_01

Absolutely. I think that whole frame of mind and that rhetoric is big tech trying to shirk responsibility for something parents have no control over. Take parental tools: in the evidence that came out against Google, they found that most of the parental tools in place were literally a PR stunt. They were advertised and touted as a huge victory, but in reality, when parents went on and tried to use these tools, they were difficult to find, they were impossible to actually use, they had tons of loopholes, and they weren’t the amazing parental tools they were marketed to be. So even when these companies say they’ve done an amazing thing by rolling out these great parental tools, the tools really weren’t as effective as claimed. Another thing is that many of the parent survivors who have lost a child to social media in one form or another were involved parents. Those were parents who did everything right, who used the parental controls, who knew the platforms and knew what platforms their kids were on, and they still couldn’t do enough to prevent these harms from reaching their children. So one thing I would ask is: what does big tech have to say to those parents who used all of those, you know, amazing parental control features, and it still wasn’t enough to protect their child? There’s a disconnect, and big tech is trying to say it has no responsibility whatsoever. Now, I don’t agree that all of the responsibility should be on big tech, but when they’re purposefully designing their platforms to be addictive, to be harmful, to lock kids in, parents can’t fight against that.
So it’s a real challenge. It really needs to be both: parents who are involved and can talk to their children about these things, like you’ve said, and big tech creating a safe platform to begin with.

SPEAKER_02

It takes all of it working together, and I’m so glad you worded it like that. We’ve had parent survivors on our podcast, and this is something we say literally in our presentations: we know parents who have lost kids. They were involved, they were communicating, they were doing all the things, and big tech failed them. It failed them in a way I just can’t believe, especially with the messaging that’s out there. Take the teen accounts they rolled out: they did a big marketing push, and you saw all the big influencers on Instagram get paid to promote teen accounts. And the teen accounts were not helpful. We tried them. So again, it’s a failure to deliver the protection you’re promising, with no warnings. It goes back to product liability and tobacco. There’s no warning, there are no safeguards in place, there are no age restrictions. We’ve seen porn sites and gambling sites with age restrictions, but there’s nothing like that on social media. I think you’re right: like you said at the beginning of the podcast, they’re really the only kind of product out there that isn’t being held liable.

SPEAKER_01

Right, right. Going back to what you said about Instagram rolling out teen accounts: in Union Station in Washington, D.C., there were ads plastered everywhere saying how amazing Instagram teen accounts were. Meanwhile, I know from Meta’s own internal documents that defaulting teen accounts on Instagram to private could have been launched months earlier than it actually was. You can see those documents yourself: a website called the Tech Oversight Project did an incredible job of posting updates and evidence from the LA social media trial. I would highly recommend it for viewers who want more information; it’s an amazing resource. Employees at Meta complained when TikTok launched default privacy for teens. They complained that TikTok was getting all the praise for doing it first when Meta could have done it months earlier but chose not to. It’s things like that that really make me question, and should make everybody question, what their actual motivations are. Clearly, child safety is not a priority.

SPEAKER_02

Well, I know we’re getting frustrated here about the messaging, and we have a right to be, but we also need to stop and celebrate these landmark wins, because I think they mark a turn of the tide in how the public sees social media.

SPEAKER_01

I’m just excited to see what comes of this. After this bellwether trial, there are going to be two more trials related to the same issues, with slightly different takes, or slightly different allegations, I should say. So there’s more coming. This is really just the start of, like you said, the tide turning.

SPEAKER_02

What are some that we can watch for? Are there certain states or certain lawsuits coming next that we should be aware of?

SPEAKER_01

Yeah. Again, at the trial level, there are two more bellwether trials. I think the next one is going to be this July, in the same court as the last one. It won’t have the same jury, but it’s the same place. Also, NCOSE has a case right now where we’re petitioning the Supreme Court for cert. The case is Does v. Twitter. It’s not a social media addiction case, but it deals with Twitter having direct knowledge that it was in possession of child sexual abuse material and profiting from it through advertising revenue. Twitter had direct knowledge of that; it reviewed the content and basically told our John Does that it didn’t find a violation of its policies. That case has gone up and down in the Ninth Circuit. The Supreme Court hasn’t granted cert yet, but we’re very hopeful that it will. The plaintiffs are so brave and so courageous to continue this fight up to the Supreme Court. So that’s definitely a case to watch. And then there’s the Social Media Victims Law Center, which has done an incredible job with these social media harms cases. For any case they’re involved in, I’m sure they have posts about it on their website, so definitely follow them as well.

SPEAKER_02

Well, as a mom over here, just trying to keep my own kids safe and helping other parents, I thank you for the work and advocacy you’re doing in this fight. And I am hopeful. I am hopeful that we’re going to have laws in place soon that come alongside me as the parent, so that I can be involved and still have all the conversations, but also have the law backing me up to make sure companies are putting out a product that truly is safe for kids, with all the safeguards that can protect children in place.

SPEAKER_01

Absolutely. I think these cases are a huge win, just in having a case move forward. But like you said, laws are extremely important as well. We need practical federal solutions like the Kids Online Safety Act, and also state-level bills with greater protections for children.

SPEAKER_02

Most of our audience are parents, just regular parents, Tori. Is there anything else you would like to say to them, from a legal standpoint, as an attorney and as part of an advocacy group that is helping to protect kids?

SPEAKER_01

Yeah, I would just say thank you for your care and interest in these issues. Thank you for taking the time to be an involved parent, to learn about these platforms and about parental safety, and what you can do on your end. As for us, we love working with parents, and we have lots of opportunities. If you want to talk to your legislator about a child safety bill you’re passionate about, we do that all the time. We have so many advocates and partners in every state, and it’s super important for legislators to hear directly from their constituents. So if you’re passionate about these issues, you can connect with us. That would be an amazing way to get more involved, if that’s something you’re interested in. It’s not scary; we give you notes on points to hit and what to say, but legislators really want to hear about your experience as a parent in this age, navigating technology, what your fears and concerns are, and what Congress or your state legislature should do about it. So there are plenty of ways to get involved. Thank you.

SPEAKER_02

Awesome, I love that. So if a parent is listening and thinking, this is my lane, I want to get involved on the legislative side, should they go somewhere specific on your website?

SPEAKER_01

You can email us at public@ncose.com. That will go straight to our inbox, and we can direct you to the right people to get in contact with from there.

SPEAKER_02

I would just say it’s okay if they write something like, hey, we heard Tori on the Next Talk Podcast and we want to get involved as a parent in legislative work. Just something real simple.

SPEAKER_01

If parents want to go to our website, that’s another great place to get involved. Our email is on there too, along with tons of resources and information. We have an incredible blog that posts daily updates on these issues and other things involving sexual exploitation, not just these big tech trials.

SPEAKER_02

Awesome. Thank you so much, Tori, again for all the work you’re doing and for taking the time out of your busy schedule to come on the show and unpack this for us in a way we can understand.

SPEAKER_01

Well, thank you for having me.

SPEAKER_00

Next Talk is a 501(c)(3) nonprofit keeping kids safe online. To support our work, make a donation at nexttalk.org. Next Talk resources are not intended to replace the advice of a trained healthcare or legal professional, or to diagnose, treat, or otherwise render expert advice regarding any type of medical, psychological, legal, financial, or other problem. You are advised to consult a qualified expert for your personal treatment plan.

nextTalk started in a church with a group of parents who were overwhelmed that their young children were being exposed to sexualized content. Today, we’re a nonprofit organization in the state of Texas and an approved 501(c)(3) entity by the Internal Revenue Service.